There is a remarkable theorem in mathematical logic that “Truth is not definable”. This is known as Tarski’s Undefinability Theorem. This result (along with Gödel’s Incompleteness Theorems) has fascinated me ever since I learned about it. This theorem (in a sense) shows us the limits of the ability to study truth within a given formal system.

Formulas and Truth

In a previous post, I briefly discussed first order logic and arithmetic. To review: formulas are built up as follows:

- equations of arithmetic terms (involving addition and multiplication) are formulas (example:

)

- inequalities of arithmetic terms are formulas (example:

)

- conjunctions, disjunctions, and negations of (smaller) formulas are formulas

- quantifications of formulas are formulas (examples:

and

). Recall that

(the existential quantifier) means “there exists” and

(the universal quantifier) means “for all”. In the context of natural numbers, this “there exists x…” means “there is some natural number x…”, and “for all y…” means “for every natural number y…”

It does not make sense to talk about whether or not a particular formula is “true”. For example: is true? It’s certainly either true or false when we plug in specific numbers for

and

. But until we do so, it does not have a truth value.

The formula does have a truth value. It is true: there really is an

, namely

, so that the formula

is true. The main difference between the formulas

and

is that the former formula has free variables (x and y), while the variables in the latter formula are bound by quantifiers. Formulas are usually denoted with Greek letters like

, etc.; if a formula has free variables, we usually specify that:

would be a formula with a single free variable,

would be a formula with two free variables, etc. If

is a formula, then

refers to the formula obtained from

by plugging in the number 2 for x, wherever x appears in the formula.

Formulas without free variables are called statements — statements have truth values. Truth values for statements are defined inductively, similar to how formulas are defined.

Given a formula , we obtain the set that it defines:

, the set of all those numbers

such that

is true. Some sets of numbers are easily seen to be definable: the set of even numbers, for example, is the set

. Other definable sets include the set of odd numbers, the set of prime numbers, the set of perfect squares, …

Arithmetization

The surprising thing about arithmetic is actually just how much is definable. While it may appear, at first, that all definable sets are based on number-theoretic properties (e.g. even numbers, odd numbers, numbers that are divisible by 23, prime numbers, composite numbers, etc), the structure of definable sets is much richer than that.

For example: finite sequences of numbers can be represented by a single number using coding. The idea of this is very simple: every number has a unique prime factorization, and so, letting be the (infinite) sequence of prime numbers (in order, so

), we let the number

code the sequence

. (The details are a bit more complicated than this, but they are not incredibly important.) Then, there is a formula

which is true if and only if

is the

number in the sequence coded by

.

This ability to code finite sequences is extremely powerful. For the purposes of this post, I will focus on how we can use this to represent first-order logic within arithmetic itself. Recall: formulas are certain finite sequences of the following symbols: , along with a countably infinite set of variables

. We can code these symbols as numbers in the following way: let 0 represent 0, 1 represent 1, 2 represent

, 3 represent

, etc. Then, since a formula is a finite sequence of these symbols, the Gödel code of a formula is the number which represents that finite sequence. In fact, there is an effective process to go between a formula

and its Gödel code

. Not every number codes a formula, but the set of all numbers which code some formula is in fact definable. Further, the set of all numbers which code a statement is also definable.

That is: there is a formula such that

is true if and only if the sequence that is coded by

represents an actual formula. For example, using the coding I started to outline above, the sequence

represents

, which is not a formula (it is an expression), while the sequence

represents the formula

.

So to sum up: there is a single formula which recognizes whether or not any finite sequence of symbols constitutes an actual formula. This means that arithmetic provides a viable background theory in which to study logical arguments. That is: we can study logic, itself, within arithmetic, by studying codes of formulas. So we can, for example, study logical proofs, since proofs are finite sequences of statements satisfying some (definable) conditions (assuming that the set of codes of axioms you are using is definable, which it is for the axioms traditionally used in arithmetic). But interestingly, this also means we can do “metalogic” inside arithmetic — that is, we can formalize statements about logical statements.

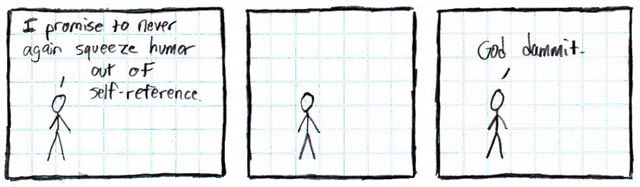

Self-reference

We are getting eerily close to the famous “Liar’s Paradox”. Consider the assertion (in natural language) “This sentence is false.” If we accept it as true, then it must actually be false, and vice versa — a contradiction either way, and so there is no coherent way to assign a truth value to that assertion. Of course in classical logic, a well formed statement must have a truth value (in the natural numbers), and so that must mean that this kind of self-reference isn’t allowed in logic, right?

Not exactly. Given any formula , there is a “self-referential” statement

which is true if and only if

is true (this result is known alternatively as the “Diagonal Lemma” or “Fixed Point Lemma”). That is,

asserts that

has the property defined by

. So for example, if

is the formula that says “There is no proof from the axioms of arithmetic of the formula coded by

“, then

would be true if and only if there is no proof of it! In other words,

is, essentially, the assertion “This sentence is not provable.” (This result is well-known as one of Gödel’s incompleteness theorems.)

So if self-reference isn’t the problem with formalizing the Liar’s Paradox, what exactly is that problem? “This sentence is not provable” can be formalized– but “This sentence is false” cannot? The issue must be with the notion of truth itself. This is precisely what Tarski’s Undefinability Theorem states: there is no formula such that, for any statement

,

is true if and only if

is true.

The proof is simple, given the “Diagonal Lemma”: suppose defines truth. That is, it expresses “The statement coded by x is true”. Then its negation should express “The statement coded by x is false.” Apply the Diagonal Lemma and obtain a self-referential statement

, which would express “This statement is false.” Then

is true if and only if it is false, which is a contradiction. Therefore there can be no formula

!

I discussed this theorem in my Computability course last semester, with a more computability-theoretic proof. I hope to describe that proof in a future post.